MentisOculi PyTorch Path Tracer

- A small, simple path tracer written in PyTorch and meant to run on the GPU.

- Why use PyTorch rather than another CUDA library or shaders? To enable arbitrary automatic differentiation. And because I can.
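
As a rough illustration of what automatic differentiation buys here, the sketch below differentiates a ray-sphere hit distance with respect to the sphere radius. It is not taken from this repository: `sphere_hit_distance` and the scene values are hypothetical, and the function assumes a unit-length direction and a ray that actually hits the sphere.

```python
import torch

def sphere_hit_distance(origin, direction, center, radius):
    """Distance along the ray to the nearest sphere hit.

    Hypothetical helper for illustration: assumes `direction` is unit length
    and that the ray hits the sphere (no miss handling).
    """
    oc = origin - center
    b = torch.dot(oc, direction)
    c = torch.dot(oc, oc) - radius ** 2
    disc = b * b - c
    return -b - torch.sqrt(disc)

# A ray from the origin looking down +z at a sphere centred at z = 5.
origin = torch.tensor([0.0, 0.0, 0.0])
direction = torch.tensor([0.0, 0.0, 1.0])
center = torch.tensor([0.0, 0.0, 5.0])
radius = torch.tensor(1.0, requires_grad=True)

t = sphere_hit_distance(origin, direction, center, radius)
t.backward()  # autograd fills radius.grad with d(t)/d(radius)
```

Here `radius.grad` is negative: growing the sphere moves the hit point closer to the camera. The same mechanism applies to any scene parameter that is stored as a tensor with `requires_grad=True`.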

Features

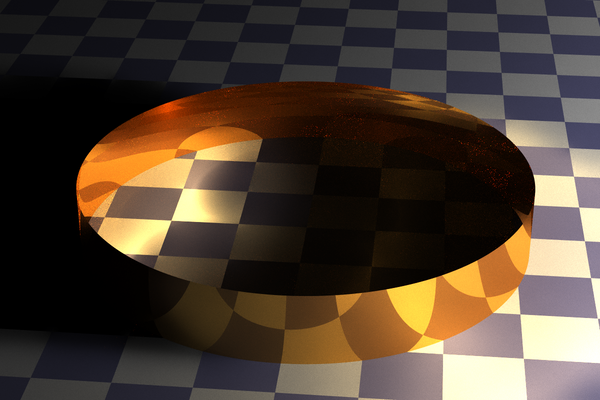

- Can trace reflective spheres and open cylinders with flat and checkered materials.

- Energy Redistribution Path Tracing, with transitions limited to rays that intersect the same initial diffuse material.

- Backwards (camera to light) path tracing

- Ray permutations in the hypercube of random numbers

- Nearly everything is done in large batches on the GPU.
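
To give a feel for the batched style, here is a minimal sketch of a ray-sphere intersection and a checkerboard lookup that operate on whole tensors of rays at once. The names `intersect_sphere` and `checker` and the choice of the xz-plane for the pattern are assumptions made for illustration, not the functions used in this project.

```python
import torch

def intersect_sphere(origins, directions, center, radius):
    """Batched ray-sphere test (illustrative, not the project's code).

    origins, directions: (N, 3) tensors; directions must be unit length.
    Returns an (N,) tensor of hit distances, with inf where a ray misses
    or the hit lies behind the ray origin.
    """
    oc = origins - center
    b = (oc * directions).sum(dim=-1)
    c = (oc * oc).sum(dim=-1) - radius ** 2
    disc = b * b - c
    # Clamp so sqrt stays finite for misses; those entries are masked out below.
    t = -b - torch.sqrt(torch.clamp(disc, min=0.0))
    hit = (disc > 0) & (t > 0)
    return torch.where(hit, t, torch.full_like(t, float("inf")))

def checker(points, scale=1.0):
    """Checkerboard mask over the xz-plane: True for one colour, False for the other."""
    ix = torch.floor(points[:, 0] * scale).long()
    iz = torch.floor(points[:, 2] * scale).long()
    return (ix + iz) % 2 == 0

device = "cuda" if torch.cuda.is_available() else "cpu"
origins = torch.zeros(3, 3, device=device)
directions = torch.tensor(
    [[0.0, 0.0, 1.0],    # hits the sphere head on
     [0.0, 1.0, 0.0],    # misses entirely
     [0.0, 0.0, -1.0]],  # sphere is behind the ray
    device=device,
)
center = torch.tensor([0.0, 0.0, 5.0], device=device)

t = intersect_sphere(origins, directions, center, 1.0)
mask = checker(torch.tensor([[0.5, 0.0, 0.5], [1.5, 0.0, 0.5]], device=device))
```

There is no per-ray Python loop: every ray in the batch goes through the same tensor operations, and misses are handled with a mask instead of a branch.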

Future Directions

- Langevin Metropolis and HMC both use gradients to increase the efficiency of sampling. This paper outlines how to do these for ray tracing.

- This paper demonstrates how to make ray tracing even more differentiable for the benefit of inverse rendering.

- Triangles and a GPU-tailored ray acceleration data structure.

- Metropolis SPPM? With HMC?
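
For orientation, a single Metropolis-adjusted Langevin (MALA) step can be sketched with autograd as below. This is a generic textbook-style sketch on a toy target, not the method from the paper mentioned above and not code from this repository: `log_target` is a standard normal standing in for the log of a path's contribution, and `mala_step` and the step size are hypothetical.

```python
import torch

def log_target(x):
    """Toy unnormalised log-density (standard normal), a stand-in for a path's log contribution."""
    return -0.5 * (x * x).sum()

def grad_log_target(x):
    """Gradient of log_target at x, obtained with autograd."""
    x = x.detach().requires_grad_(True)
    (g,) = torch.autograd.grad(log_target(x), x)
    return g

def mala_step(x, step, generator):
    """One Metropolis-adjusted Langevin step. Returns (new_x, accepted)."""
    # The proposal drifts along the gradient, then adds Gaussian noise.
    mean_fwd = x + 0.5 * step * grad_log_target(x)
    y = mean_fwd + step ** 0.5 * torch.randn(x.shape, generator=generator)
    mean_rev = y + 0.5 * step * grad_log_target(y)
    # Log proposal densities q(y | x) and q(x | y); shared constants cancel.
    log_q_fwd = -((y - mean_fwd) ** 2).sum() / (2 * step)
    log_q_rev = -((x - mean_rev) ** 2).sum() / (2 * step)
    log_alpha = log_target(y) - log_target(x) + log_q_rev - log_q_fwd
    accept = bool(torch.log(torch.rand((), generator=generator)) < log_alpha)
    return (y if accept else x), accept

gen = torch.Generator().manual_seed(0)
x = torch.zeros(2)
samples = []
for _ in range(200):
    x, _ = mala_step(x, step=0.5, generator=gen)
    samples.append(x)
samples = torch.stack(samples)
```

The gradient only shapes the proposal. The accept/reject test keeps the chain targeting the same distribution as a plain Metropolis sampler would.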

Credits

- While the code has been significantly morphed, it was originally a fork of James Bowman's Python raytracer.

- This was inspired by my ongoing work on secure differentiable programming, specifically adversarial examples in neural networks, at the ETH SRI Lab.